Behind an Interactive Video Wall

As technology evolves to enable more natural user interactions, the physical way we connect to devices is changing. Input accessories like keyboards, remote controls, and even smartphone screens will eventually become obsolete, first occupying landfills and then the shelves of Brooklyn antique shops. Interactive video walls, controlled through touch or hand gestures, are a great example of this evolution. They have a wide range of applications and are taking root among forward-looking organizations. Plus, we’ve found interactive video walls to be just plain fun for users, adding a gamification element to the process of information-gathering. Recently, we were fortunate to work on an interactive video wall for Coldwell Banker, and we made some interesting discoveries along the way.

The Coldwell Banker Video Wall

The goal of the project was to wow prospective real estate brokers. After some collaborative brainstorming between Coldwell Banker and Modus, we decided to create an interactive video wall for the Coldwell Banker headquarters in New Jersey. The final concept was a giant interactive map that displays a range of real-time information including:

- Searches for real estate listings on www.coldwellbanker.com

- Tweets from real estate agents

- Office locations worldwide

- Many more capabilities that may be implemented over time

The result is a showpiece that weaves geography, broker perspective, and market demand into a dynamic, interactive experience. There are a few different ways to implement a video wall like Coldwell Banker’s. Here are two of the big decisions we needed to make along the way, and how we evaluated the options.

Selecting the Technology

When we started building, the Microsoft Kinect motion sensor dominated the market, which made it the natural choice. Microsoft also provides a software development kit (SDK) for the Kinect that works with Microsoft Visual Studio and C# and includes several built-in functions for recognizing gestures and movements.

There are also many third-party SDK options. We evaluated several and found that while some offered more functionality, they were all more work to implement and maintain. Microsoft’s SDK is a solid choice, especially as it has built up more capabilities over the years. Still, whatever you’re trying to build, it’s worth evaluating third-party options. This is a burgeoning area of technology, and new APIs are being developed all the time.

Customizing the Experience

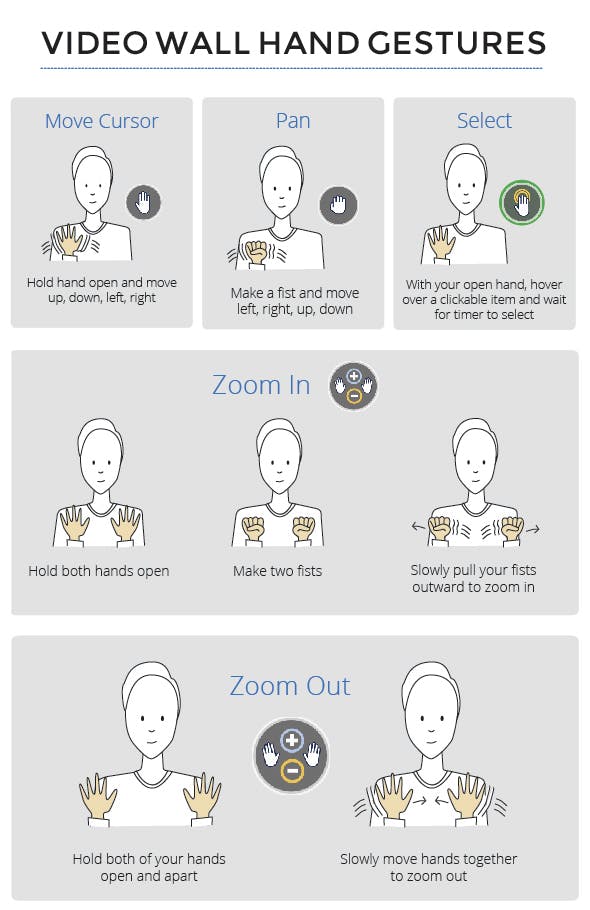

One of the biggest challenges was implementing gestures, a critical part of the user’s experience. Microsoft’s SDK provides functions for many gestures, but depending on what you’re trying to build, you may need to hand-code some interactions yourself. At the time we were building the Coldwell Banker video wall, Microsoft’s SDK didn’t provide functionality for zooming in and out, so we had to do a lot of low-level hand-coding to create that interaction for the user (which turned out to be a bit more tedious than we originally anticipated!).
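To give a sense of what hand-coding a zoom gesture involves, here is a minimal sketch. It is not the code from the Coldwell Banker wall and it does not use the Kinect SDK’s API. It is written in Python for brevity, and it assumes some sensor layer already hands you left-hand and right-hand positions as (x, y, z) coordinates in meters on every frame. The `ZoomGesture` class, its method names, and the threshold values are all hypothetical. The idea is simply that the zoom factor is the current distance between the hands divided by the distance when the gesture began, with a small dead zone to absorb sensor jitter.

```python
import math
from dataclasses import dataclass, field
from typing import Optional, Tuple

# Hypothetical input type: an (x, y, z) hand position in meters,
# as some sensor layer might report it once per frame.
Point = Tuple[float, float, float]


@dataclass
class ZoomGesture:
    """Turns the changing distance between two hands into a zoom factor."""

    dead_zone: float = 0.05           # ignore changes under 5% (assumed value)
    min_start_distance: float = 0.10  # hands must start 10 cm apart (assumed value)
    _start_distance: Optional[float] = field(default=None, init=False, repr=False)

    def begin(self, left: Point, right: Point) -> bool:
        """Start tracking; returns False if the hands are too close together."""
        distance = math.dist(left, right)
        if distance < self.min_start_distance:
            return False
        self._start_distance = distance
        return True

    def update(self, left: Point, right: Point) -> float:
        """Return the zoom factor for this frame (1.0 means no change)."""
        if self._start_distance is None:
            return 1.0  # no gesture in progress
        ratio = math.dist(left, right) / self._start_distance
        if abs(ratio - 1.0) < self.dead_zone:
            return 1.0  # treat tiny movements as jitter
        return ratio

    def end(self) -> None:
        """Stop tracking, e.g. when a hand drops or leaves the frame."""
        self._start_distance = None
```

In use, you would call `begin` when both hands are raised, feed `update` the latest hand positions each frame, and multiply the map’s scale by the returned factor. Spreading the hands apart yields a factor above 1 (zoom in) and bringing them together yields a factor below 1 (zoom out). A real implementation would likely also need smoothing across frames and a reliable way to tell when the user actually intends to start and stop the gesture, which is where much of the tedium comes from.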

Experimenting with this new technology was exciting, and the process of actually implementing a project with it was enlightening. The Kinect is also capable of facial gesture recognition and voice recognition, so there’s a lot more to explore. We expect to see more companies embrace this technology as it continues to advance!